Three pieces are involved: a well-maintained Helm chart to deploy external-dns, a way to integrate with the alb-ingress-controller, and permissions that let external-dns create DNS records in Route53, but only for specific zones.

We deploy our Kubernetes cluster with the EKS Terraform module. We use the module outputs in our resources, and we format our OIDC URL so that the providers can use it as expected. You may not have the same naming or tagging policy; our purpose here is not to convince you to use ours, just to provide a possible solution.

We have also decided to deploy external-dns in the kube-system namespace. This part is totally up to you, as are the naming and the variables.
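To make the OIDC URL formatting and the zone restriction concrete, here is a minimal sketch of an IAM role that external-dns could assume. It is not the original configuration: it assumes the EKS module is declared as `module.eks` and exposes the `cluster_oidc_issuer_url` and `oidc_provider_arn` outputs, and the variable `external_dns_zone_ids`, the role name, and the service account name `external-dns` are hypothetical.

```hcl
# Hypothetical input: IDs of the Route53 hosted zones external-dns may modify.
variable "external_dns_zone_ids" {
  type        = list(string)
  description = "Route53 hosted zone IDs that external-dns is allowed to change"
}

locals {
  # IAM condition keys expect the OIDC issuer without the "https://" scheme.
  # Assumes the EKS module instance is named "eks".
  oidc_issuer = replace(module.eks.cluster_oidc_issuer_url, "https://", "")
}

# Trust policy: only the external-dns service account in kube-system
# (name assumed) may assume this role through the cluster's OIDC provider.
data "aws_iam_policy_document" "external_dns_assume" {
  statement {
    effect  = "Allow"
    actions = ["sts:AssumeRoleWithWebIdentity"]

    principals {
      type        = "Federated"
      identifiers = [module.eks.oidc_provider_arn]
    }

    condition {
      test     = "StringEquals"
      variable = "${local.oidc_issuer}:sub"
      values   = ["system:serviceaccount:kube-system:external-dns"]
    }
  }
}

resource "aws_iam_role" "external_dns" {
  name               = "external-dns"
  assume_role_policy = data.aws_iam_policy_document.external_dns_assume.json
}

# Permissions: record changes are limited to the listed zones;
# listing zones and records has to stay allowed on all resources.
data "aws_iam_policy_document" "external_dns" {
  statement {
    effect    = "Allow"
    actions   = ["route53:ChangeResourceRecordSets"]
    resources = [for id in var.external_dns_zone_ids : "arn:aws:route53:::hostedzone/${id}"]
  }

  statement {
    effect    = "Allow"
    actions   = ["route53:ListHostedZones", "route53:ListResourceRecordSets"]
    resources = ["*"]
  }
}

resource "aws_iam_role_policy" "external_dns" {
  name   = "external-dns-route53"
  role   = aws_iam_role.external_dns.id
  policy = data.aws_iam_policy_document.external_dns.json
}
```

Restricting `route53:ChangeResourceRecordSets` to explicit hosted zone ARNs is what keeps external-dns away from zones it should not touch, whatever the controller itself is configured to do.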

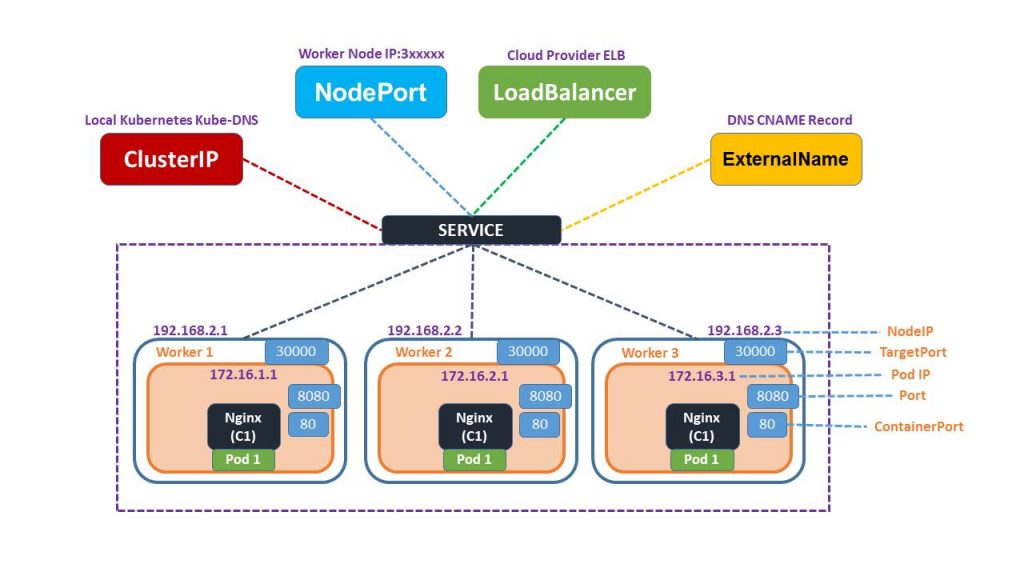

To deploy our apps, we instantiate a Helm chart with the appropriate values in our Kubernetes cluster. Our ingress is managed by an alb-ingress-controller, which creates an application load balancer in our AWS account with all the ingresses we need.

But when a load balancer is online, it needs a DNS name, and AWS does not allow an ALB to create DNS records itself on Route53. Until recently we used a Lambda function triggered by a CloudWatch event when we create an ALB. It reads a custom tag attached to the created ALB, containing the ALB's DNS name, and creates a DNS record if it does not exist.

Further complicating matters, those DNS records are merely intermediary. The actual DNS records that our clients use to access our applications are not managed in AWS Route53, and that will not change until we finish our migration to AWS. We can't abandon our good old datacenters and the associated DNS infrastructure: a lot of applications and clients are still using it, and the legacy yak-shaving is never a quick journey.

This approach was not usable, and we needed another solution.

I can't make breaking changes to the infrastructure just because I add a DNS stack. Full compatibility with my colleagues' work is a requirement: they did great work and already deployed a lot of stuff! For instance, we have an alb-ingress-controller in our Kubernetes cluster to create load balancers: I can't decide to change variables used by this piece if I need other values in my DNS stack.

Also, I need to come up with a solution that allows piece-by-piece migration: we want to migrate our DNS management deployment by deployment, as per the SRE/devops motto "atomic changes, often".

I would also prefer not to redesign half our infrastructure stack just to add my DNS brick. Ideally, I want to deploy external-dns with the Helm Terraform provider within the same Terraform module that deploys my EKS cluster.
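Deploying external-dns with the Helm Terraform provider, in the same module as the cluster, might look like the sketch below. This is not the original configuration: the chart repository, the value keys (`provider`, `policy`, `domainFilters`, `serviceAccount`), and both variables are assumptions that depend on the chart and version you pick, and the `helm` provider is assumed to be already configured against the EKS cluster.

```hcl
# Hypothetical inputs: the IAM role external-dns should use and the
# DNS domains it is allowed to manage.
variable "external_dns_role_arn" {
  type        = string
  description = "ARN of the IAM role assumed by the external-dns service account"
}

variable "external_dns_domains" {
  type        = list(string)
  description = "DNS domains external-dns may create records in"
}

resource "helm_release" "external_dns" {
  name       = "external-dns"
  namespace  = "kube-system"
  repository = "https://kubernetes-sigs.github.io/external-dns/" # assumed chart source
  chart      = "external-dns"

  # Value keys follow the kubernetes-sigs chart as an assumption;
  # check the values.yaml of the chart version you actually deploy.
  values = [yamlencode({
    provider = "aws"

    # "upsert-only" creates and updates records but never deletes them,
    # which suits a deployment-by-deployment migration.
    policy = "upsert-only"

    # Only manage records in the listed domains.
    domainFilters = var.external_dns_domains

    serviceAccount = {
      name = "external-dns"
      annotations = {
        # Lets the pod assume the IAM role through the cluster's OIDC provider.
        "eks.amazonaws.com/role-arn" = var.external_dns_role_arn
      }
    }
  })]
}
```

Keeping the release in the same module as the cluster means the role ARN and domain list can be wired from existing resources without touching the values used by the alb-ingress-controller.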
